1. Preliminaries: What is terminal-bench? What is Terminus?

terminal-bench is a benchmark for evaluating how well AI agents can solve real tasks by operating in a terminal environment. Even though it says “terminal,” it includes lots of interesting math/ML problems, so it’s basically: “can AI agents do what ML/AI/SW engineers do :P?” It matters because terminal-based work is where a lot of real engineering happens: debugging, coding, running experiments, and shipping fixes end-to-end.

Terminus is an agent harness that lets an LLM interact with a real terminal (via tmux), observe outputs, and iterate until it decides to submit a final answer. Compared to other “in-machine” harnesses that directly interact with the virtual machine via various tools, Terminus is intentionally minimal: it’s a clean loop of “send command → read buffer → think → repeat”.
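To make the loop concrete, here is a toy sketch in Bash. It is not Terminus code: `agent_loop`, `toy_model`, and the `DONE` convention are names we made up for illustration, and each command runs in a fresh `bash -c` rather than in a persistent tmux session.

```bash
#!/usr/bin/env bash
# Toy "send command -> read output -> think -> repeat" loop.
# ask_model is a HYPOTHETICAL callback: it receives the last observation on
# stdin and prints either the next shell command or the literal word DONE.
agent_loop() {
  local ask_model="$1" max_steps="${2:-10}"
  local observation="(session start)" action step
  for (( step = 1; step <= max_steps; step++ )); do
    # "think": hand the latest observation to the model
    action="$(printf '%s\n' "$observation" | "$ask_model")"
    if [[ "$action" == "DONE" ]]; then
      echo "submitted after $((step - 1)) command(s)"
      return 0
    fi
    # "send command" + "read buffer": run it and capture stdout/stderr
    observation="$(bash -c "$action" 2>&1)"
  done
  echo "step budget exhausted"
  return 1
}

# Stand-in for an LLM, for demonstration only: one command, then stop.
toy_model() {
  local seen
  seen="$(cat)"
  if [[ "$seen" == "(session start)" ]]; then
    echo "echo hello"
  else
    echo "DONE"
  fi
}
```

Calling `agent_loop toy_model 5` runs one command and then submits. Note that nothing in this loop checks whether the task is actually finished; the loop simply trusts the model when it says it is done.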

Most frontier AI labs equip their models with Terminus 2 and report the evaluation results on terminal-bench. Conceptually it’s very simple and elegant, and it’s tempting to believe that a good enough agent should be able to get the maximum accuracy out of it in the end.

But that needs some fixing…

2. Reality

However, if you actually run various agents on terminal-bench and look at how they fail, you see a lot of interesting failure modes. We developed an in-house agent behavior analyzer, i.e., an automated analysis of what agents are good at, what they are bad at, and how they behave when they succeed or fail.

The analysis results are pretty shocking.

TL;DR: Because Terminus 2 is an absolutely minimal harness, highly capable models make a lot of avoidable mistakes under it.

Here are the most important takeaway messages from our analysis of Terminus 2 + frontier models.

2-1. Models are optimized to “assist” humans, not to totally replace humans

I think this is the most important message I want to deliver in this report. Models are mostly trained to “assist” us (us = humans) by interacting with us, not to complete the whole task on their own.

– Models tend to submit partial work and “give it a shot”

This is totally fine in the traditional “assistant” setup, and indeed it was a desired behavior. The models show us partial results so that we (humans) can give feedback to them early on. However, this is a bad thing for terminal-bench (or any longer-horizon task) where we expect agents to complete the whole task with perfection. When we put these models in an agent loop that only weakly steers their behaviors toward completion, they still tend to return semi-complete results, especially when the task is very difficult and requires long-horizon planning. Terminus 2 is so minimal that it fails to guide the model to complete the whole task with high probability.

– Models tend to show some visuals to humans

This is my favorite finding. Recent LLMs all do have “eyes,” and they are post-trained to believe they have eyes. However, they were still trained to assist us, so when tasks require complex visual inspection or understanding, the models often hand off those hard visual-understanding parts to us, ugh!!! Terminus 2 doesn’t prevent this hand-off behavior.

2-2. Models are still not perfect

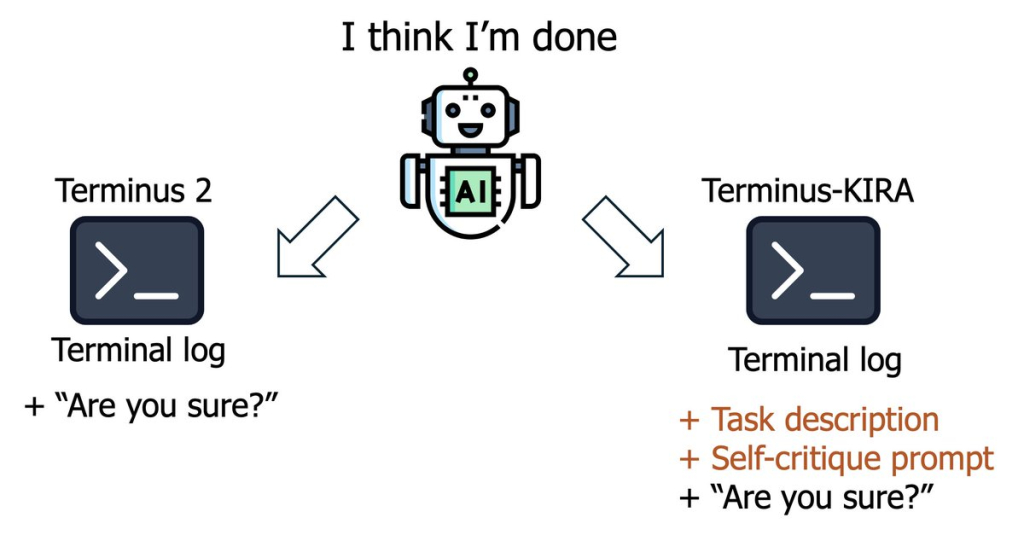

– Bad self-evaluation (false completion)

Models are really bad at evaluating their own output. When the model thinks it’s done, Terminus 2 is designed to ask back “are you sure?” The model usually just says “yup, I am sure :-)” even if its work is incomplete or wrong. This causes a lot of “false completion” errors.

– Bad adaptive replanning

There are genuine bottlenecks in today’s frontier models. They are pretty good at planning from the beginning given all the information, but after they observe new information, they struggle to adjust their old plans.

2-3. Terminal-bench and Terminus-specific failure modes

For instance, sometimes the problem is vague about how general the solution should be. Does the agent need to build a bullet-proof solution that works in an arbitrarily different environment, or is it okay to just check that the solution runs well in the current environment? That’s not clear in most cases. Agents tend to find a solution that answers the question in the narrower sense. This is the kind of question where “good” students would have raised their hand and asked for clarification during an exam! However, there is no such luxury for the agents, so they end up guessing between the narrower and the stricter problem specification.

Under the TerminalBench2 rules, agents don’t know how much time they are given. As a result, some agents install huge libraries and tools and waste too much time on dependencies.

There are also two critical limitations specific to Terminus:

- Terminus uses a “push and wait” mechanism, i.e., it sends a command to the underlying tmux session and waits for a “guessed” runtime. This wastes a non-negligible amount of time.

- Terminus uses an internal tmux screen, reads off its buffer output, and passes it to an LLM. The default buffer size is too small, which confuses agents when they read large files.

3. Introducing Terminus-KIRA

We propose a few simple fixes to the challenges mentioned above.

To address the first challenge, we make a few changes to the agent prompt & add one more tool.

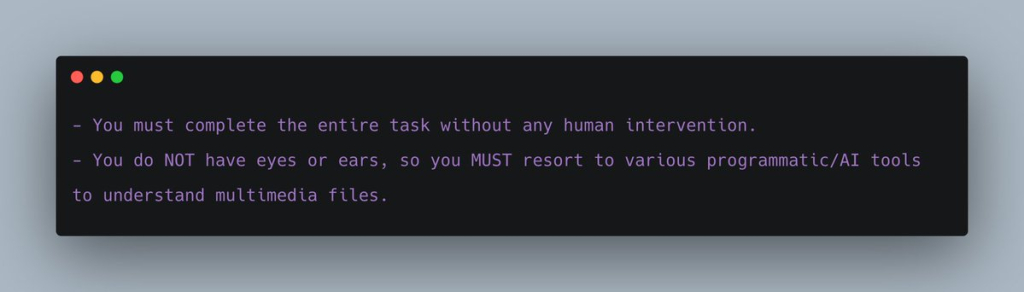

- The agent prompt now clearly specifies that there is only one submission without any human interaction, and the submission is FINAL, etc. This way, we prevent agents from submitting premature answers.

- To avoid agents expecting humans to help with visual inspection, we literally add this prompt: “You must complete the entire task without any human intervention.”

- Then, since the tmux buffer cannot forward multimedia files, we add a tool that’s specifically designated for multimedia file reading/comprehension. Thus, LLMs are given two tools: (1) issuing a command, or (2) directly reading/comprehending multimedia files using the backend LLM. We also added the following prompt: “You do NOT have eyes or ears, so you MUST resort to various programmatic/AI tools to understand multimedia files.”

We also attempt to address the second challenge (bad self-evaluation and bad replanning).

- The self-completion-check part now requires a very thorough step-by-step objective self-evaluation of the progress & results. We saw a significant reduction in false completion rate.

- For replanning, we used a very simple prompting technique to help the model adaptively replan better.
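For the self-completion check, our fix lives in the prompt. As a rough illustration of what “objective” can mean, here is a harness-side analogue in Bash: the “done” claim is accepted only if every check command exits with status 0. The function name `verify_completion` and the idea of passing checks as strings are assumptions for this sketch, not part of Terminus-KIRA.

```bash
#!/usr/bin/env bash
# Hypothetical completion gate: accept "task complete" only if every
# check command passed as an argument exits with status 0.
verify_completion() {
  local failed=0 check
  for check in "$@"; do
    if bash -c "$check" >/dev/null 2>&1; then
      echo "PASS: $check"
    else
      echo "FAIL: $check"
      failed=1
    fi
  done
  # 0 = all checks passed, 1 = at least one check failed
  return "$failed"
}
```

For example, `verify_completion "test -f result.csv" "python -m pytest -q"` would report one line per check and return non-zero if either fails (the file name and test command here are placeholders).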

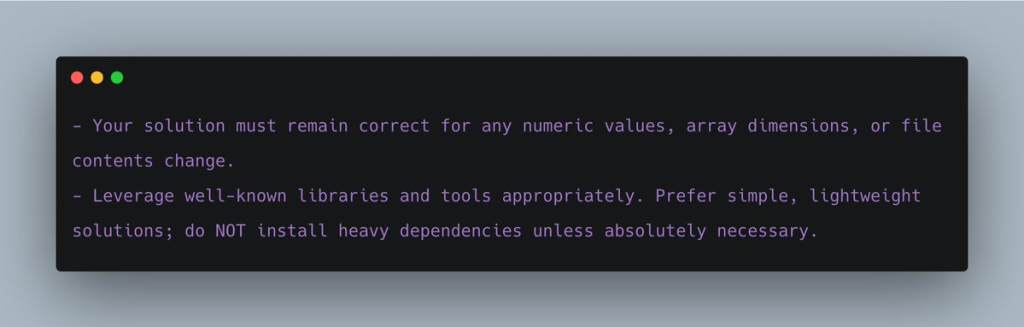

Lastly, we also added a few generic tips to the prompt, for instance:

The first tip helps the agent come up with a broader, more generic solution. The second tip helps the agent avoid installing heavy dependencies that cause timeout errors.

Finally, we modified the tmux interface so that it uses a “pull” mechanism. Now the agent doesn’t have to sit out the excess time when the predicted runtime is longer than the actual runtime. We also increased the tmux buffer size.
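The difference between “push and wait” and “pull” can be sketched in plain Bash. This is not the Terminus-KIRA implementation: `run_and_pull`, the status file, and the 0.1 s polling interval are assumptions for illustration, and the sketch uses a background process instead of tmux so that it stays self-contained.

```bash
#!/usr/bin/env bash
# Hypothetical helper: start a command, then poll for its completion and
# return as soon as it finishes, instead of sleeping for a guessed runtime.
# Usage: run_and_pull MAX_WAIT_SECONDS COMMAND [ARGS...]
run_and_pull() {
  local max_wait="$1"; shift
  local log status_file rc
  log="$(mktemp)"
  status_file="$(mktemp)"   # stays empty until the command has finished

  # Run in the background; record the exit code once the command is done.
  ( "$@" >"$log" 2>&1; echo "$?" >"$status_file" ) &

  local deadline=$(( SECONDS + max_wait ))
  until [[ -s "$status_file" ]]; do
    if (( SECONDS >= deadline )); then
      cat "$log"
      echo "[still running after ${max_wait}s]"
      return 124   # same convention as timeout(1)
    fi
    sleep 0.1
  done

  cat "$log"
  rc="$(cat "$status_file")"
  rm -f "$log" "$status_file"
  return "$rc"
}
```

With a guessed wait of 10 seconds, `run_and_pull 10 echo hello` comes back almost immediately, while a command that really is slow hits the deadline and returns partial output. In a tmux-based harness, the same idea could be implemented by polling the pane for a completion marker.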

4. Results?

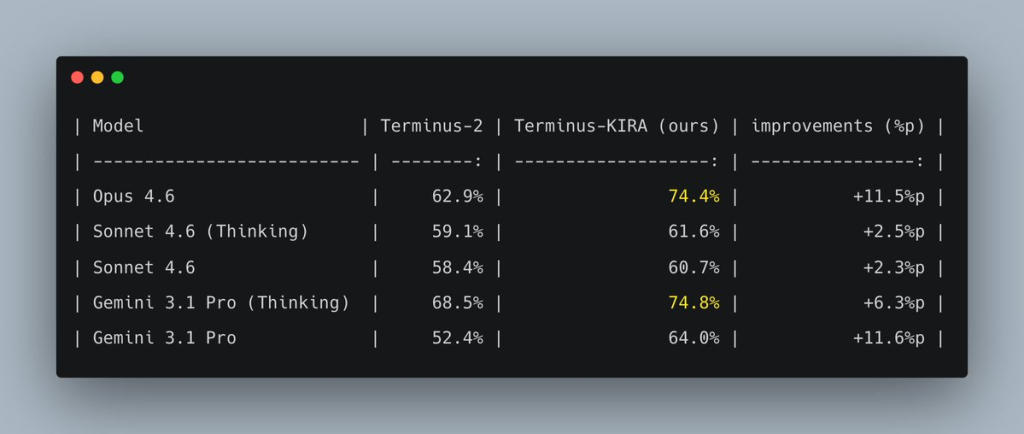

It works :)

We decided to open-source Terminus-KIRA because why not:

https://github.com/krafton-ai/kira

Enjoy :)

5. Takeaway & prediction

TL;DR:

- Terminus-KIRA boosts frontier models by 10 percentage points

- Our fixes turn out to be very simple yet very effective.

- You should use Terminus-KIRA.

And I am sure we will see > 80% on TerminalBench2 sooner or later just with a better harness with the current models. Just my 100 won :-)

References: https://krafton-ai.github.io/blog/terminus_kira_en/, https://github.com/krafton-ai/KIRA/tree/main

How to implement the Terminus-KIRA concept in OpenCode?

Answered by Gemini 3.1 Pro, 2026-02-22

Implementing the Terminus-KIRA concept in OpenCode requires a slightly different approach than the Gemini CLI because OpenCode is already built as an autonomous, multi-tool agent. By default, OpenCode executes commands, reads the output, and decides what to do next.

However, LLMs often suffer from “hallucinated success”—glancing at a terminal log and assuming a command worked without properly verifying it. To enforce the strict KIRA self-critique loop, you can leverage OpenCode’s system prompts, custom commands, or its non-interactive CLI mode.

Here are three ways to architect this, depending on how deeply you want to integrate the feedback loop.

Method 1: The Native Route via AGENTS.md (System Prompt Engineering)

OpenCode automatically reads an AGENTS.md file in your project root to establish its ground rules and system instructions. You can inject a strict prompt engineering directive here to force the agent into a KIRA-style self-critique loop during its autonomous runs.

Create or edit AGENTS.md in your project root and add this block:

```markdown
## Execution & Validation Protocol (KIRA Framework)

Whenever you execute a bash command or modify a file, you MUST follow this strict self-critique loop before taking your next action:

1. **Observe:** Read the terminal output (stdout/stderr) or the linter diagnostics carefully.
2. **Critique:** Explicitly write a `### KIRA Validation` section in your response. Analyze if the previous action achieved the goal without throwing warnings or errors.
3. **Decide:**
   - If successful: State "VALID" and proceed to the next step of the plan.
   - If failed/errors exist: State "INVALID", identify the logical gap or syntax error, and immediately execute the corrected command. Do not ask for user permission to fix your own errors.
```

This modifies the foundational prompt of the OpenCode build agent, forcing it to act as its own discriminator before moving forward.
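If you want to check that the agent actually follows the protocol, you can scan its response for the verdict. The helper below is a hypothetical sketch (the name `kira_verdict` is ours, not something OpenCode provides): it reads a response on stdin and prints `VALID`, `INVALID`, or `MISSING` when no `### KIRA Validation` section contains a verdict.

```bash
#!/usr/bin/env bash
# Hypothetical checker for the AGENTS.md protocol above.
# Reads an agent response on stdin and prints VALID, INVALID, or MISSING.
kira_verdict() {
  awk '
    /^### KIRA Validation/  { in_section = 1; next }   # section starts
    in_section && /^#/      { in_section = 0 }         # next heading ends it
    # Test INVALID first, because the string "INVALID" also contains "VALID".
    in_section && /INVALID/ { print "INVALID"; found = 1; exit }
    in_section && /VALID/   { print "VALID";   found = 1; exit }
    END { if (!found) print "MISSING" }
  '
}
```

A `MISSING` result means the agent skipped the self-critique step entirely, which is worth surfacing separately from an `INVALID` verdict.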

Method 2: On-Demand Evaluation via Custom Commands

OpenCode allows you to build predefined prompts as Markdown files. This is perfect for creating a dedicated KIRA review tool that you can manually trigger via the Terminal User Interface (TUI) when the agent gets stuck.

- Create a directory for project commands: `mkdir -p .opencode/commands`

- Create a new file called `.opencode/commands/kira-review.md`.

- Add the following prompt structure:

```markdown
---
description: "Force a strict KIRA self-critique of the last terminal execution"
---

# KIRA Validation Module

Review your most recent tool executions and the resulting terminal outputs.
Act as a strict, critical code reviewer.

TASK: Did your last command successfully achieve its intended goal?

- Look for hidden warnings, missing dependencies, or silent failures.
- If it was flawless, reply ONLY with 'VALID'.
- If there is any issue, explain the logical gap concisely and provide the exact command or file edit needed to fix it. Do not attempt to execute it yet, just provide the fix.
```

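To use it: Inside the OpenCode TUI, press Ctrl+K (or Cmd+K), select your project:kira-review command, and hit Enter. The exact way to invoke custom commands depends on your OpenCode version; some versions expose them as slash commands, in which case you would type /kira-review instead. The agent will pause its generative work and evaluate its own history.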

Method 3: The Headless Bash Wrapper (For Script Automation)

If you prefer building external automation loops, OpenCode supports a non-interactive mode where it processes a prompt, prints standard output, and exits. You can use this to build a KIRA shell script where OpenCode acts purely as the evaluator for your local terminal commands.

Save this as opencode_kira.sh and make it executable:

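```bash
#!/usr/bin/env bash
# Usage: ./opencode_kira.sh "<goal>" "<first command>"

GOAL="$1"
CURRENT_CMD="$2"
MAX_ROUNDS=5   # give up eventually instead of looping forever

if [[ -z "$GOAL" || -z "$CURRENT_CMD" ]]; then
  echo "Usage: $0 \"<goal>\" \"<first command>\"" >&2
  exit 1
fi

for (( round = 1; round <= MAX_ROUNDS; round++ )); do
  echo -e "\n⚡ Executing: $CURRENT_CMD"

  # Run command and capture logs (stdout + stderr) and the exit code
  TERMINAL_LOG=$(eval "$CURRENT_CMD" 2>&1)
  EXIT_CODE=$?
  echo -e "📄 Log:\n$TERMINAL_LOG"

  # Headless OpenCode call acting as the KIRA discriminator.
  # Assumption: `opencode run` is the non-interactive subcommand;
  # check `opencode --help` for the form your version uses.
  echo "🧠 Evaluating via OpenCode..."
  EVALUATION=$(opencode run "Goal: $GOAL. Executed Command: $CURRENT_CMD. Exit Code: $EXIT_CODE. Terminal Log: $TERMINAL_LOG. TASK: If the command succeeded without errors, reply ONLY with 'SUCCESS'. If it failed, reply ONLY with the exact next shell command to fix it, enclosed in a \`\`\`bash block.")

  if [[ "$EVALUATION" == *"SUCCESS"* ]]; then
    echo "✅ Task successfully completed."
    exit 0
  fi

  # Parse the next command from OpenCode's markdown output
  NEXT_CMD=$(echo "$EVALUATION" | awk '/^```bash/{flag=1; next} /^```/{flag=0} flag' | head -n 1)
  if [[ -z "$NEXT_CMD" ]]; then
    echo "❌ Agent failed to provide a valid correction."
    exit 1
  fi

  # Note: the next round runs a model-suggested command via eval.
  CURRENT_CMD="$NEXT_CMD"
  sleep 2
done

echo "❌ Gave up after $MAX_ROUNDS rounds."
exit 1
```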
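The most fragile step in this wrapper is pulling the next command out of the model's reply. A small sketch of that step in isolation makes it easy to try on sample replies before letting the loop execute anything. The function name `first_bash_command` is hypothetical, and it assumes the reply uses a fence that starts with three backticks followed by `bash` at the beginning of a line.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the first non-empty line found inside the
# first bash-tagged fenced block read from stdin; print nothing if the
# reply contains no such block.
first_bash_command() {
  awk '
    /^```bash/   { inside = 1; next }   # opening fence
    /^```/       { if (inside) exit }   # closing fence: stop looking
    inside && NF { print; exit }        # first non-empty line in the block
  '
}
```

An empty result is the signal to stop: the wrapper above treats it as “the agent failed to provide a valid correction.”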
